About

Peter Dijkstra studied Game Design & Development at the Utrecht University of the Arts, graduating with Honours in 2016. His game development experience, however, started before graduation with The Flock, a project that generated considerable buzz and won a Dutch Game Award.

During this time, he also developed his own real-time VJing toolset and took it live to venues such as the Paradiso in Amsterdam and the A MAZE Festival in Berlin.

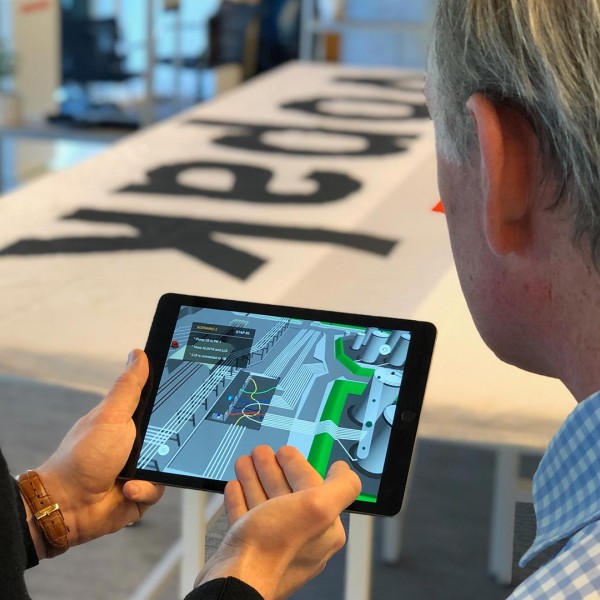

Peter combines strong development skills with a sharp sense of design, particularly in UX and visual design, and specializes in augmented reality and video game development. He is currently based in Amsterdam, the Netherlands.

Skills include: Unity, C#, JavaScript, TypeScript, three.js, Babylon.js, music visualization, AR, VR, game design and development

Feel free to email Peter: peter at crossup dot tech